Avahi exposes back-end models to Amazon SageMaker while strengthening the security posture of data exchanges containing sensitive information

THE CHALLENGE

EyeGage, a start-up company based in Atlanta, planned to go to market with its mobile application, which uses machine-learning models to detect recent drug or alcohol use from a simple scan of the eye. A key requirement was getting the front-end app and the models to work with Amazon SageMaker, the back-end cloud platform that enables app developers to create, train, and deploy machine-learning models.

“Our prototype worked well in AWS, and our models were already trained,” says Dr. LaVonda Brown, Founder & CEO. “But we needed help in exposing the models to SageMaker to ensure we could scale our services as user activity spikes.”

EyeGage first attempted to expose the machine-learning models by using internal resources. But without prior experience with SageMaker, working through the documentation proved difficult. As a participant in the AWS Impact Accelerator Program, which focuses on Black founders in the technology sector, Brown was then able to tap into personalized coaching and capital funding.

THE SOLUTION

AWS referred EyeGage to Avahi. “Avahi immediately impressed us with their knowledge about machine learning models and their understanding of our business,” says Brown. “More importantly, they presented previous SageMaker projects they had taken on that were similar to what we needed. That gave us confidence Avahi could do the job.”

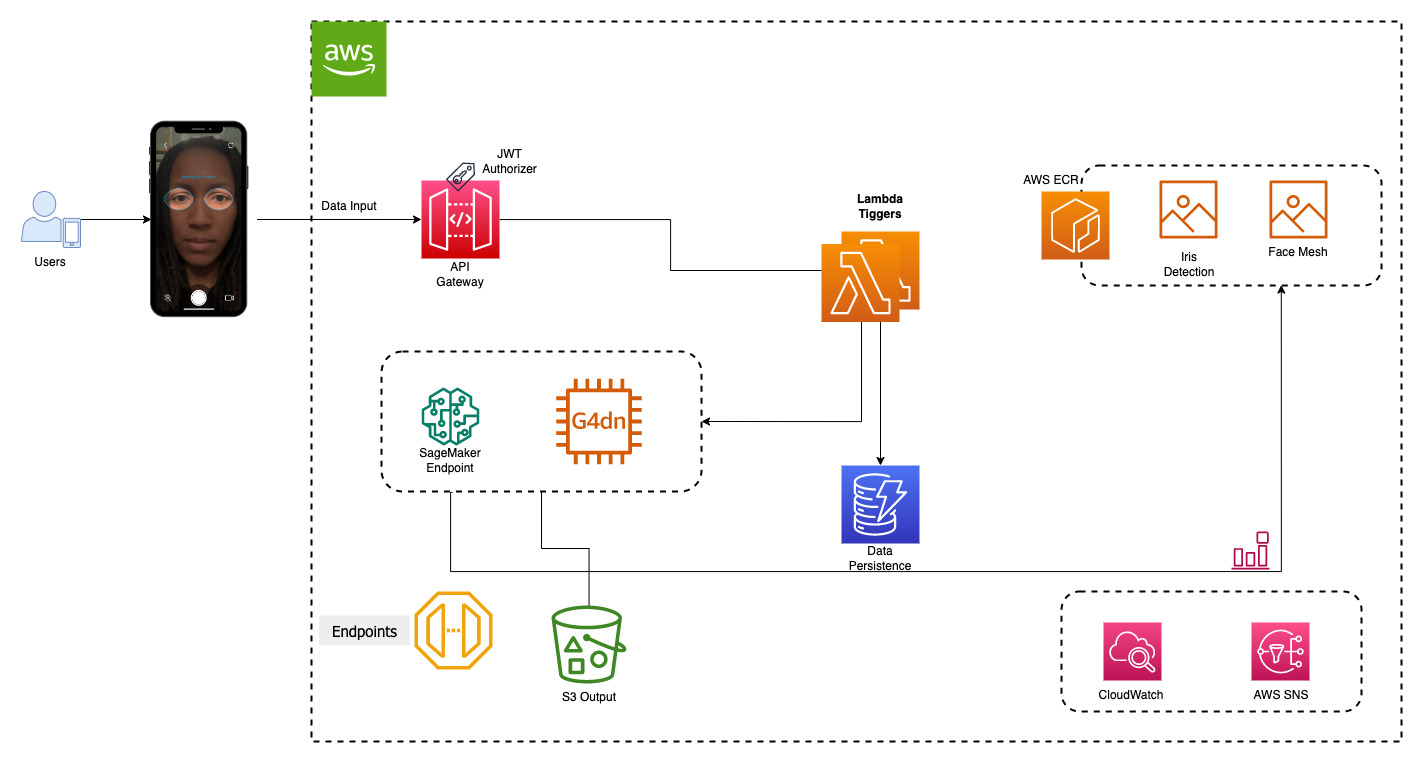

Avahi wrapped the code of the machine-learning models in SageMaker so that the models are exposed to the EyeGage mobile app through a single API. This included updating the models’ code so it can run as event-driven machine-learning inference. Avahi also combined the models with additional services such as AWS Lambda for serverless computing and Amazon API Gateway, a managed service for creating and maintaining APIs.
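The request path described above (API Gateway, then Lambda, then a SageMaker endpoint) can be sketched roughly as follows. This is an illustrative sketch only, not EyeGage's or Avahi's actual code: the endpoint name `eye-scan-endpoint`, the `image_b64` request field, the content type, and the assumption that the model returns JSON are all hypothetical. The only AWS call used is `invoke_endpoint` on the boto3 `sagemaker-runtime` client.

```python
import base64
import json

ENDPOINT_NAME = "eye-scan-endpoint"  # hypothetical endpoint name


def make_runtime_client():
    # Imported lazily so the module can be loaded without boto3 installed.
    import boto3
    return boto3.client("sagemaker-runtime")


def lambda_handler(event, context, runtime=None):
    """Handle an API Gateway proxy event carrying a base64-encoded eye image.

    `runtime` is an optional injected client, which keeps the handler testable;
    in Lambda it is left as None and a real client is created.
    """
    try:
        body = json.loads(event.get("body") or "{}")
        # `image_b64` is an assumed field name for the uploaded scan.
        image_bytes = base64.b64decode(body["image_b64"], validate=True)
    except (KeyError, TypeError, ValueError):
        return {"statusCode": 400, "body": json.dumps({"error": "invalid request"})}

    if runtime is None:
        runtime = make_runtime_client()

    # Forward the raw image bytes to the hosted model.
    response = runtime.invoke_endpoint(
        EndpointName=ENDPOINT_NAME,
        ContentType="application/x-image",
        Body=image_bytes,
    )
    # Assumes the model container answers with a JSON document.
    result = json.loads(response["Body"].read())
    return {"statusCode": 200, "body": json.dumps(result)}
```

Because the Lambda function runs only when a request arrives, and the endpoint is managed by SageMaker, each layer can scale independently when user activity spikes.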

RESULTS

With SageMaker exposing the machine-learning models, the front-end drug and alcohol screening app can scale as it sends eye images and receives scan results. The programming applied by Avahi also streamlines the comparison of current and past scan results, which will allow EyeGage to provide users with additional valuable information about their condition.

“Avahi also helped us understand all the AWS resources we are using, and they encoded the AWS back-end to receive JSON web tokens,” adds Brown. “This gives us a more secure way to send data back and forth, which is critical given the sensitivity of the information we process for our customers.”
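To illustrate what receiving a JSON web token on the back end involves, here is a minimal sketch of signing and verifying a token using only the Python standard library. It assumes the HS256 algorithm with a shared secret and an optional `exp` claim; the case study does not say which algorithm, claims, or validation layer EyeGage uses, so those choices and the function names are hypothetical. A production system would more likely rely on a maintained JWT library or a managed authorizer than on hand-written checks.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(segment: str) -> bytes:
    # JWT segments drop base64 padding, so restore it before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_hs256(claims: dict, secret: bytes) -> str:
    """Build a compact JWT: base64url(header).base64url(claims).base64url(sig)."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url_encode(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    sig = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url_encode(sig)}"


def verify_hs256(token: str, secret: bytes, now: Optional[float] = None) -> dict:
    """Return the claims if signature and expiry check out, else raise ValueError."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        signature = _b64url_decode(sig_b64)
        signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    except (ValueError, TypeError) as exc:
        raise ValueError("malformed token") from exc
    # Pin the algorithm rather than trusting whatever the header claims.
    if not isinstance(header, dict) or header.get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(expected, signature):
        raise ValueError("bad signature")
    claims = json.loads(_b64url_decode(payload_b64))
    exp = claims.get("exp") if isinstance(claims, dict) else None
    current = time.time() if now is None else now
    if exp is not None and current >= exp:
        raise ValueError("token expired")
    return claims
```

The signature check is what lets the back end trust that a request carrying scan data really comes from an authenticated app session and was not altered in transit.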

The work completed by Avahi helped EyeGage prepare for investor demo sessions, which are critical for helping the company launch operations. Brown says EyeGage plans to make a free version of the app available to consumers during Q4 of 2022. EyeGage will also develop a business version for screening employees and customers, which is expected to be available by the end of Q1 2023.

“By partnering with Avahi, we were able to accelerate the timeline to get our mobile app ready for our investor demos, and we are now confident the app will scale well when we go to market. We know we can also turn to Avahi again for helping us integrate with AWS services as we continue to enhance our application.” – Dr. LaVonda Brown, Founder & CEO

CUSTOMER PROFILE

EyeGage allows workplaces and individuals to stay ahead of accidents with confidence—quickly and accurately screening for substances prior to operating life-threatening equipment like cars, cranes, and scalpels. EyeGage can be used to detect classes of substances such as alcohol, amphetamines, benzodiazepines, opioids, and marijuana because eyes look and behave differently when under the influence of these substances.

KEY TECHNOLOGIES

- Amazon SageMaker

- Machine Learning

- JSON Web Tokens

KEY RESULTS

- Exposes mobile app eye scans to back-end machine learning models and returns results

- Prepares the app for investor demos

- Enables app to scale when workload spikes occur

- Improves the security posture of the app

- Sets the stage for the app to go to market

Architecture Diagram